Yesterday at Biblithèque Nationale de France, I took part in a panel discussion on longue durée in history, organised by the Revue Annales – Histoire et Sciences Sociales. Of course I am not a historian, and I wouldn’t be able to tell whether one interpretation of longue durée is better than another. But historians are now raising questions that are common to the social sciences and humanities more generally: how to benefit from big data and how to re-think the political engagement of the researcher. So I was there to talk about big data and how they change not just research practices and methods, but also researchers’ position relative to power, politics, and industry. This questions cross disciplinary boundaries, and all may benefit from dialogue.

What ignited the historians’ debate was an attempt by two leading scholars, David Armitage and Jo Guldi, to restore history’s place as a critical social science, based on (among other things) increased availability of large amounts of historical data and the digital tools necessary to analyze them. Before their article in Annales, they published a full book in open access, the History Manifesto, where they develop their argument in more detail. Their writing is deliberately provocative, and indeed triggered strong (and sometimes very negative) reactions. Yet the sheer fact that so many people took the trouble to reply, proves that they stroke a chord.

What do they say about big data? They highlight the opportunity of accessing large and rich archives and to expand research beyond any previous limitations. Their enthusiasm may seem excessive but it is entirely understandable insofar as their goal is to shake up their colleagues. My approach was to take their suggestion seriously and ask: what opportunities and challenges do data bring about? How would they affect research, especially for historians?

My first answer to these questions is that in itself, today’s hype around data offers an opportunity to enhance the role of historians in society. They are best placed to remind us that even though common discourse often presents data as a specificity of today’s digitisation, scientists and policy-makers have always used (some) data to draw conclusions on important social issues, and to inform decisions. Since antiquity, governments used census to understand and control population movements, and to plan policy actions. Statistics as a discipline arose in the seventeenth century to guide states (whence its name), and gained ground through its progressive integration into the scientific method. The long run is the relevant perspective to understand the place of data in society: the specificities of today need to be compared and contrasted with this rich history.

This is not to deny that today’s big data bring about specific challenges, and in this sense they differ from (to take the same example) census. Some of these challenges are methodological, and will directly affect the work of the historian who wants to use them. First, “big” does not mean “exhaustive”, and most “big” datasets consist of biased samples with (potentially many) missings. This is because they rarely result from a deliberate attempt to collect data for scientific purposes, so that there is no effort to ensure (for example) statistical representativeness. Another alleged advantage of big data is their high resolution: in some cases, there are observations every second or even fraction of a second. This might seem well-suited to the needs of historians (or anyone interested in the temporal dimension of phenomena), but in fact, the ensuing high dispersion and volatility often require, ironically, to reduce the data to a more aggregate, less detailed level. Most importantly for historians, long-term comparisons may be problematic to the extent that there is rarely any methodical planning of data collection exercises, the technical devices in use evolve over time, and preservation of older formats is not systematically ensured. These (and other) obstacles are not insurmountable, but they have to be taken into account, and solutions must be devised.

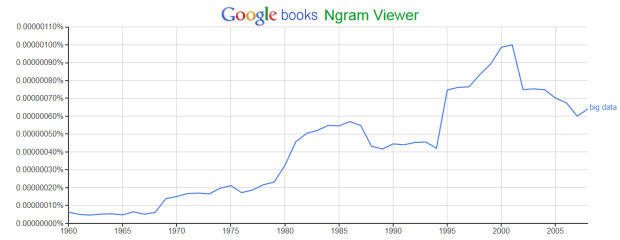

Beyond methods, other challenges involve the independence of the researcher and the relationship to economic and political power. Big data are often the private property of companies, for which they constitute key competitive resources, in ways that discourage their release to outside users, including researchers. Tools such as Google Books and Ngram Viewer seem to be popular among historians (or at least, they are pointed to by Armitage and Guldi): but what if Google decides, one day, to discontinue the service? Or even just to keep secret the gaps in their corpus, or the limitations of their text-searching algorithms? After all, Google is a private business that has every right to make decisions for its own interest – which may or may not be aligned with the interests of public research. Another source of potential misalignment is the involvement of political or military power. Data are of obvious (and growing) interest to intelligence services, as the Snowden scandals of two years ago abundantly proved; these actors have resources that may heavily influence the production, release, use or analysis of data. Again, the production of public knowledge is not their priority. This is not to say that nothing can be done, or that social scientists and historians should stay away from any risk associated to big data; but that they need a profound reflection on their place in the polity, their relationship to power and their stance towards economic interests.

Finally, what material conditions should be in place for researchers in the social sciences and humanities (and more specifically, in history) to engage with big data? The problem is that few of them have been trained in data analysis, statistics and computing. On the one hand, teaching programmes will have to change, to equip future researchers with the tools they will need. On the other hand, today’s researchers may benefit from collaborations across disciplines – so that what the historian cannot do, will be done by a co-author from computer science, and vice versa. Unfortunately, few scientific institutions actually reward trans-disciplinarity in terms of recognition and career progression, even though they all pay lip service to it; and in many areas of the humanities and social sciences, co-authorship is still frowned upon. Removing these barriers is essential to tap into the potential of big data.

3 Comments