Contemporary debates on AI and labour often focus on quantitative impacts, such as potential job losses. Yet, the qualitative question of how AI reshapes the experience of work is equally critical. A recent open-access book chapter that I co-authored with Antonio A. Casilli examines these transformations, highlighting their already tangible, if not yet final, effects on job quality.

The analysis starts with AI deployment, that is, the introduction of AI solutions into the workplace – for example, the use of automated transcription tools. AI deployment is typically framed as a productivity enhancer that can improve job quality by making work more engaging – and in some cases, even safer. However, critics argue that firms often use AI to intensify labour through tighter managerial control and surveillance. In this scenario, AI may degrade job quality by restricting autonomy, reinforcing algorithmic management, and limiting workers’ ability to negotiate their roles or pace.

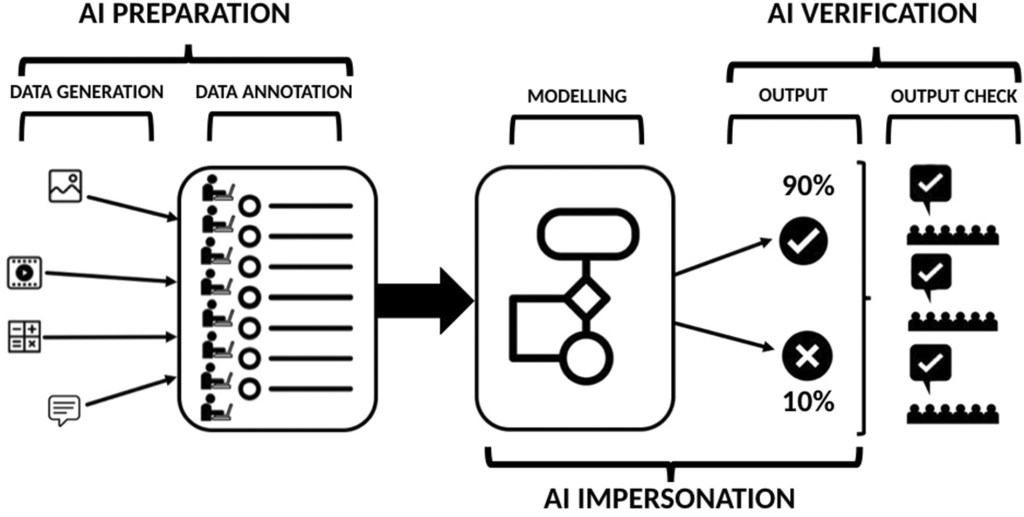

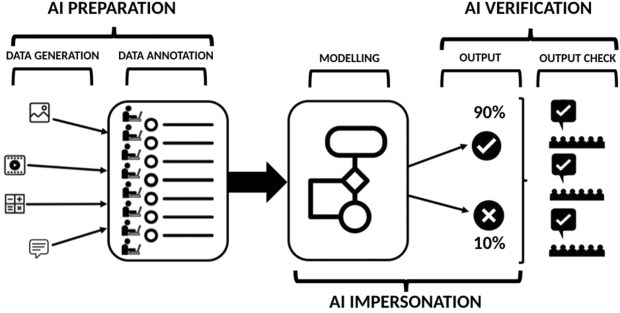

But before AI tools reach the workplace, they must be designed, built, and marketed. Today’s AI production relies heavily on machine learning, which requires vast datasets. This process involves extensive “data work,” performed by myriad lower-level workers in three key functions: preparation (data generation and annotation), verification (quality control of AI outputs), and impersonation (manually performing tasks meant to be automated). While this process increases labour demand, the quality of data work is often poor, with low wages, job insecurity, and misrecognition of skills. The root of these issues lies in labour platformization.

To see this, let’s first take a step back and consider that most data work is procured through outsourcing (shifting lower-value functions outside the firm) and offshoring (relocating work to lower-wage countries). These practices exclude externalised workers from the resources of lead firms, creating a “planetary market” where AI companies in the US and Europe source labour from countries like Venezuela or Madagascar. Digital platforms amplify these trends, enabling on-demand outsourcing to precarious micro-providers globally. Platformization thus exacerbates the negative effects of outsourcing and offshoring, degrading job quality.

Platformization is best understood at the crossroads of two broader socioeconomic tendencies. First, the “fissured workplace”, where companies market goods or services without employing all those who contribute to their design, assembly, or delivery. This model heightens insecurity, as metrics and surveillance systems erode autonomy and increase stress. Offshore platform workers, in particular, face isolation, losing traditional workplace camaraderie and support. Second, “dispersion”, the constant need to manage interruptions, multitask, and prioritise competing demands. AI-driven tools, such as real-time scheduling algorithms and productivity trackers, intensify dispersion, blurring boundaries between professional and personal life and increasing mental strain.

Overall, then, the effects of AI on job quality are mixed. While AI deployment can enhance productivity and safety, it often intensifies labour and undermines autonomy. Meanwhile, AI production creates jobs, but these are frequently precarious and low-quality. Understanding these dynamics is essential for shaping equitable futures that leverage the potential of contemporary technologies while safeguarding labour rights and well-being.

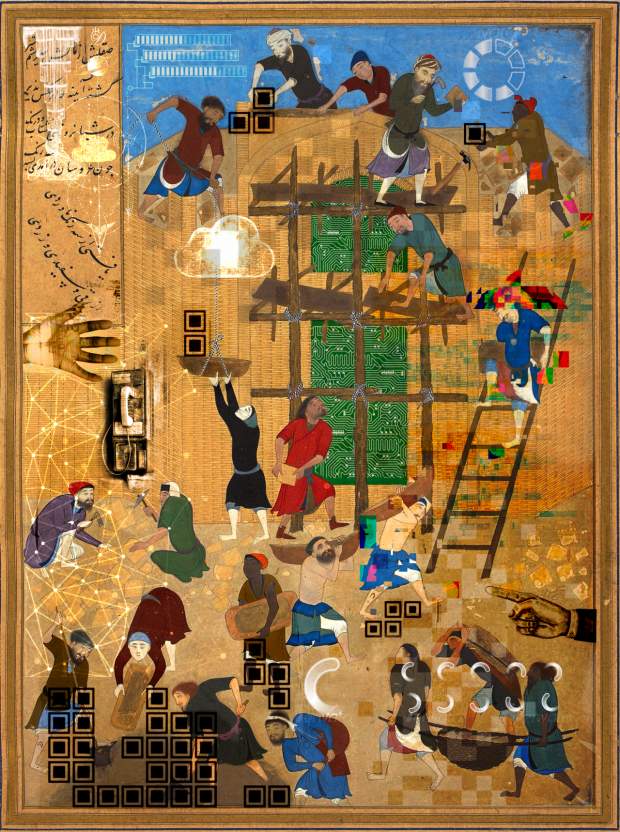

This is not a story of technological determinism. AI alone does not destroy jobs or degrade their quality; rather, its impacts stem from the broader economic forces that shape its presence and role in today’s economies. The above artwork captures this idea by foregrounding humanity’s collective endeavour in building artificial intelligence, drawing inspiration from Persian miniature painting. In sum, the future of work in the age of AI will hinge on political choices about production organisation and the distribution of its benefits and costs.

The book chapter can be found as: Casilli, A.A. & Tubaro, P. 2026. “What is AI Doing to Job Quality? Platformization, Fissured Workplaces and Dispersion”. Chapter 3 in A. Piasna & J. Leschke (eds.) Job Quality in a Turbulent Era. Cheltenham, UK: Edward Elgar Publishing, pp. 45–60, https://doi.org/10.4337/9781035343485.00009

A video of the book’s launch event – including my presentation of the chapter – is available here.