Yes, I must admit it: I didn’t keep my new-year-2015 promise of posting more often on my blog… and the annual report I received yesterday from WordPress, showing a couple of peaks of activity and frightening silence the rest of the year, isn’t something I would be proud to share… but I have a justification! Seriously, it’s not just an excuse – it’s that I’ve been busy trying to change my life… and yes, I managed. On Monday 4 January, I’ll start an exciting new position as senior research scientist at the National Centre for Scientific Research (CNRS, or in French, Centre national de la recherche scientifique) in Paris. CNRS can be loosely compared to what is, in other countries, a National Research Council, but there’s more to it than international comparisons might suggest: this is probably the single most desired job in French academia, with a mission “to contribute to the development of knowledge… in all fields that contribute to the advancement of society”. In plain words, that’s basically pure research with almost no teaching apart from some PhD supervision… a dream that would hardly be possible in the UK, where I was before.

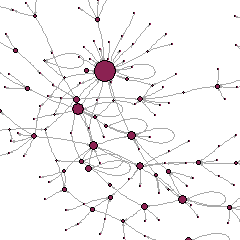

I’ll be at the Lab for Computer Science (LRI, Laboratoire de Recherche en Informatique, UMR8623) on the Saclay campus, and I’ll work with the A&O (Learning and Optimization) research team. The interesting thing is that mine is an interdisciplinary position, designed to facilitate dialogue and collaboration between the social sciences and computer science around big data and their use for the advancement of knowledge, policy, and more generally society. I was specifically selected by the sociology section of CNRS to work in a computer science research centre. There, I am asked to develop my personal, long-term research project on the “sharing economy” of digital platforms and how they create value from the social ties in which economic action is embedded: this will require blending my research on data, social networks and the digital economy with machine learning and optimization approaches (more on this later… yes, on this blog! Promise!).

What else will I do this year at LRI? I am on the organising committee of the Second European Social Networks Conference, which will take place in Paris next June; I am finishing a book on so-called “pro-anorexia” websites as the conclusion of my past project ANAMIA; and I am on the editorial board of Revue Française de Sociologie.

I won’t entirely forget England though… I’ll keep my doctoral students at Greenwich and continue my engagement at UCL’s Institute of Education as external examiner. Come on, you can’t just disappear after six years! Indeed, I’ll always remember those six years as most productive and fulfilling. And however happy I am now to join CNRS, I’ll never forget the expressions of love, sympathy and friendliness I received from colleagues and students when I left Greenwich in December. The cards, the presents, the parties… all beyond any expectations I might have had! Thank you, Greenwich. And well, yes, a big thank you to all those who made it possible – both those in London who gave me a great time far from home for so long, and those in Paris who helped me come back, not without effort, and have now welcomed me.

A great new year is about to start, and I promise I’ll document it more… 😉